Let’s start with the basics: REC.601 and REC.709 are standards for encoding video in digital form; the former is used for SD (e.g. DVD, DVB) and the latter for HD (e.g. Blu-ray, HDTV). Both are SDR (Standard Dynamic Range), mastered for a peak brightness of 100 nits; this is a legacy of the CRT era, when the technology did not allow higher brightness.

But technology evolved: from cathode ray tubes to CCD and later CMOS sensors in cameras, and from cathode ray tubes to plasma and LCD panels in displays. The maximum brightness that could be captured and shown rose to a few hundred nits, then to a thousand nits and more, and with it the dynamic range grew from around six stops in the CRT era to ten, twelve, fourteen stops and beyond.
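Since every stop is a doubling of light, a dynamic range expressed in stops converts to a contrast ratio as a power of two. A small sketch of that arithmetic (the function names are only illustrative):

```python
import math


def stops_to_contrast(stops: float) -> float:
    """Each stop doubles the light, so n stops span a 2**n : 1 contrast ratio."""
    return 2.0 ** stops


def contrast_to_stops(peak_nits: float, black_nits: float) -> float:
    """Dynamic range, in stops, between a peak and a black level (both in nits)."""
    return math.log2(peak_nits / black_nits)


for stops in (6, 10, 12, 14):
    print(f"{stops} stops -> {stops_to_contrast(stops):,.0f}:1")
# 6 stops -> 64:1
# 10 stops -> 1,024:1
# 12 stops -> 4,096:1
# 14 stops -> 16,384:1
```

So six stops is only 64:1, while fourteen stops is already more than 16,000:1.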

Still, the standards kept the peak brightness at a mere 100 nits. With 8 bits, the legal video range for luma is 16–235, leaving 0–15 for “below black” and 236–255 for “super white” (chroma uses 16–240); this limited range is used by almost all video formats.
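The 16–235 range is just a scale and offset applied to the normalized luma signal. A minimal sketch of the mapping in both directions (function names are illustrative):

```python
def to_limited_range_8bit(e: float) -> int:
    """Map a normalized luma signal E' (0.0 = black, 1.0 = nominal white) to an 8-bit code.

    Nominal black lands on 16 and nominal white on 235; values outside 0..1
    end up in the "below black" footroom or the "super white" headroom.
    """
    code = round(16 + 219 * e)
    return max(0, min(255, code))  # stay inside what 8 bits can store


def from_limited_range_8bit(code: int) -> float:
    """Inverse mapping: 16 -> 0.0, 235 -> 1.0; codes outside give values < 0 or > 1."""
    return (code - 16) / 219


print(to_limited_range_8bit(0.0), to_limited_range_8bit(1.0))  # 16 235
print(round(from_limited_range_8bit(255), 3))                  # 1.091
```

Code 255 decodes to about 1.09, i.e. super white only reaches roughly 9% above nominal white.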

So HDR was introduced to let modern displays show more brightness, and to let 35mm film scans and modern digital cameras show their full potential. It is now possible to encode video signals up to 10000 nits with Dolby Vision and HDR10+, even though most movies are mastered at 1000 nits and a few at 4000 nits, since many home displays peak below a thousand nits.
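The 10000-nit ceiling comes from the PQ transfer function (SMPTE ST 2084), which HDR10, HDR10+ and Dolby Vision all build on: it maps absolute brightness in nits to a 0–1 signal. A sketch of the encode/decode pair, using the constants published in ST 2084:

```python
# SMPTE ST 2084 (PQ) constants
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32


def pq_encode(nits: float) -> float:
    """Absolute luminance in nits (0..10000) -> normalized PQ signal (0..1)."""
    y = min(max(nits, 0.0), 10000.0) / 10000.0
    ym = y ** M1
    return ((C1 + C2 * ym) / (1 + C3 * ym)) ** M2


def pq_decode(signal: float) -> float:
    """Normalized PQ signal (0..1) -> absolute luminance in nits."""
    e = min(max(signal, 0.0), 1.0) ** (1 / M2)
    y = (max(e - C1, 0.0) / (C2 - C3 * e)) ** (1 / M1)
    return 10000.0 * y


for nits in (100, 1000, 4000, 10000):
    print(nits, round(pq_encode(nits), 3))
```

With these formulas 100 nits sits at about 0.51 of the PQ signal and 1000 nits at about 0.75, so SDR-level brightness uses only the lower half of an HDR signal.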

What about movies (whether shot on 35mm or digitally, it does not matter) that were converted and mastered in SDR? Is their brightness limited to 100 nits? Well, yes. And no. Since analog film and digital camera sensors can capture between 12 and around 16 stops (in some cases even more), the captured highlights go way beyond what a 100-nit signal can hold. So, when scanning film or converting a DI (Digital Intermediate) into SDR-compatible masters, the highlights above 100 nits may be simply clipped (and so lost forever) or compressed into the 16–235 levels.

How? Let’s say a movie has a 1000-nit peak brightness: you can assign level 235 to 1000 nits (instead of 100 nits), so that 234 may be 900 nits, 233 may be 800 nits and so on (note: this is just an example to give you an idea). In the end, the whole dynamic range of the master is compressed into an 8-bit, limited-range SDR video.
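The 900/800-nit steps above are only illustrative. A common way to squeeze highlights in is a "knee": values below a threshold pass through, and everything above it is compressed into the little room left before 100 nits. The sketch below uses a hypothetical knee at 80 nits, a hypothetical 1000-nit master, a per-stop (log2) shoulder and a plain 2.4 gamma; none of these values come from any real mastering pipeline. It also shows that expanding the highlights back only works when the exact curve is known.

```python
import math

SDR_WHITE = 100.0     # nominal SDR peak, in nits
KNEE = 80.0           # hypothetical: below this, brightness passes through unchanged
SOURCE_PEAK = 1000.0  # hypothetical peak brightness of the master, in nits


def compress_highlights(nits: float) -> float:
    """Squeeze 0..SOURCE_PEAK nits into 0..SDR_WHITE; above KNEE each stop gets an equal share."""
    if nits <= KNEE:
        return nits
    t = math.log2(min(nits, SOURCE_PEAK) / KNEE) / math.log2(SOURCE_PEAK / KNEE)
    return KNEE + t * (SDR_WHITE - KNEE)


def expand_highlights(sdr_nits: float) -> float:
    """Exact inverse of compress_highlights, usable only if KNEE and SOURCE_PEAK are known."""
    if sdr_nits <= KNEE:
        return sdr_nits
    t = (min(sdr_nits, SDR_WHITE) - KNEE) / (SDR_WHITE - KNEE)
    return KNEE * (SOURCE_PEAK / KNEE) ** t


def to_sdr_code(nits: float) -> int:
    """Compress, gamma-encode with 1/2.4, and quantize into the 8-bit 16..235 range."""
    e = (compress_highlights(max(nits, 0.0)) / SDR_WHITE) ** (1 / 2.4)
    return round(16 + 219 * e)


for nits in (0, 80, 100, 500, 1000):
    print(nits, to_sdr_code(nits))
```

With these particular numbers everything from 80 to 1000 nits ends up in roughly the top 20 code values, and detail lost to that quantization cannot be restored by the inverse curve.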

The problem is how to decompress it properly. Frankly, I don’t know… What I do know is that modern HDTVs can (and often do) show SDR video at much higher brightness than 100 nits, but doing so compromises the intentions of the content creators either way.

Best solution? Watch the HDR version if available. But even when it is available, the SDR version may sometimes have something better, like a bigger frame size and/or a different color grading. What if someone wants to make a fan restoration project using SDR as a source? Is it limited to the SDR release only? I’ve discovered that it’s possible to get the best of both: the bigger frame size and/or color grading of the SDR (HD or SD) along with the better definition and/or better highlights of the UHD HDR.

This is the simplified process flow:

- convert the SDR source to HDR, getting the SDR-to-HDR version

- regrade the SDR-to-HDR version as HDR, to recover most or all of the highlights (if present)

- overlay the HDR luma onto the SDR-to-HDR chroma, to get the best result
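One simple reading of the last step is to keep the Cb/Cr planes of the SDR-to-HDR frame and replace only its Y plane. The sketch below assumes both frames are already in the same YCbCr space (e.g. PQ, BT.2020), scaled and aligned to the same size; the optional mask is a hypothetical addition for areas that only the SDR source covers (such as its larger frame), where its own luma is kept. The function name is illustrative, not taken from any particular tool.

```python
import numpy as np


def overlay_luma(hdr_ycbcr, sdr2hdr_ycbcr, mask=None):
    """Return a frame with Y from hdr_ycbcr and Cb/Cr from sdr2hdr_ycbcr.

    Both inputs are float arrays shaped (height, width, 3) in Y, Cb, Cr order.
    mask (optional, bool, shape (height, width)): True where the HDR luma is
    used; elsewhere the SDR-to-HDR luma is kept.
    """
    hdr = np.asarray(hdr_ycbcr, dtype=np.float64)
    base = np.asarray(sdr2hdr_ycbcr, dtype=np.float64)
    if hdr.shape != base.shape or hdr.shape[-1] != 3:
        raise ValueError("frames must be aligned YCbCr arrays of the same size")
    out = base.copy()  # chroma (and, outside the mask, luma) from SDR-to-HDR
    if mask is None:
        out[..., 0] = hdr[..., 0]
    else:
        out[..., 0] = np.where(mask, hdr[..., 0], base[..., 0])
    return out
```

Because Cb and Cr are differences relative to luma, swapping Y while keeping them can shift how saturated colors look, so the result is worth checking against both sources.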

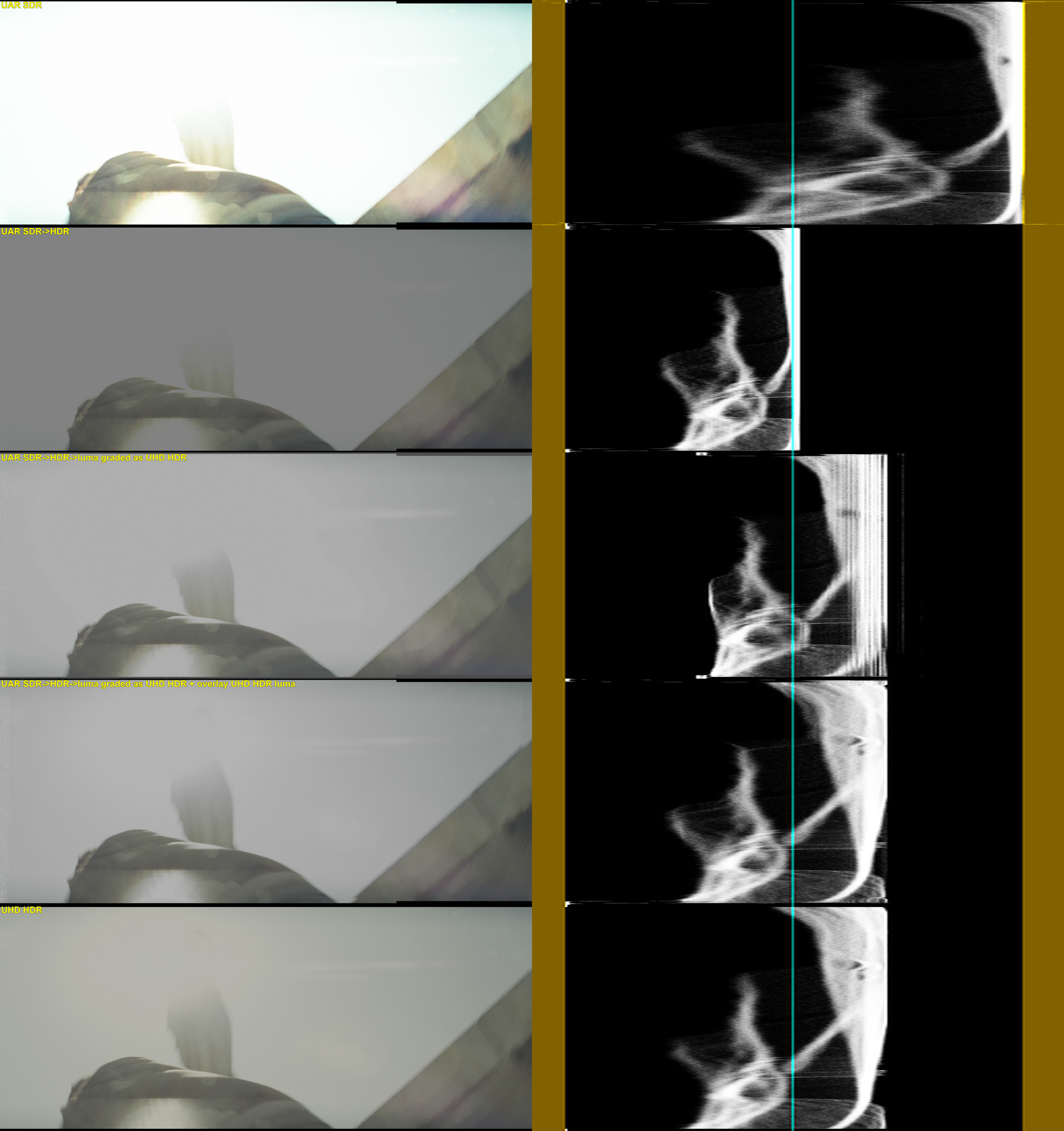

The following examples are from Transformers (2007), from top to bottom:

- UAR SDR – made using two letterbox sources (HD-DVD and Blu-ray) and one open-matte-but-cropped version (HDTV)

- UAR SDR->HDR – converted taking into account a maximum brightness of 100 nits

- UAR SDR->HDR->luma graded as UHD HDR – regrading UAR SDR->HDR using UHD HDR as reference, but using only its luma

- UAR SDR->HDR->luma graded as UHD HDR + overlay UHD HDR luma – overlaying only the UHD HDR luma onto UAR SDR->HDR, keeping the SDR colors

- UHD HDR – untouched UltraHD Blu-ray
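The post does not say which tool performed the SDR->HDR conversion. As one common approach, assuming a BT.709 source decoded with a 2.4 display gamma (BT.1886 with zero black level), the conversion places SDR white at 100 nits, moves the colors from BT.709 to BT.2020 primaries in linear light (using the matrix from ITU-R BT.2087), and encodes the result with PQ. A minimal sketch, working on normalized R'G'B' values (0.0–1.0, i.e. already expanded from 16–235):

```python
import numpy as np

# SMPTE ST 2084 (PQ) constants
M1, M2 = 2610 / 16384, 2523 / 4096 * 128
C1, C2, C3 = 3424 / 4096, 2413 / 4096 * 32, 2392 / 4096 * 32

# Linear-light BT.709 -> BT.2020 primaries (ITU-R BT.2087)
BT709_TO_BT2020 = np.array([
    [0.6274, 0.3293, 0.0433],
    [0.0691, 0.9195, 0.0114],
    [0.0164, 0.0880, 0.8956],
])


def pq_encode(nits):
    """Absolute luminance in nits -> normalized PQ signal, element-wise."""
    y = np.clip(nits, 0.0, 10000.0) / 10000.0
    ym = y ** M1
    return ((C1 + C2 * ym) / (1 + C3 * ym)) ** M2


def sdr_to_hdr(rgb709, sdr_white_nits=100.0):
    """Non-linear BT.709 R'G'B' in [0, 1], shape (..., 3) -> PQ-encoded BT.2020 R'G'B'.

    Anything above 1.0 (super white) is clipped, so the output never exceeds
    sdr_white_nits, matching a conversion with a 100-nit maximum.
    """
    rgb = np.clip(np.asarray(rgb709, dtype=np.float64), 0.0, 1.0)
    linear709 = rgb ** 2.4                       # display gamma 2.4, zero black level
    linear2020 = linear709 @ BT709_TO_BT2020.T   # change primaries in linear light
    return pq_encode(linear2020 * sdr_white_nits)


white = sdr_to_hdr([1.0, 1.0, 1.0])
print(np.round(white, 3))
```

SDR white comes out at about 0.51 in PQ (100 nits), which is why the SDR->HDR highlights stay capped until the regrade step.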

Notes – in no particular order:

- UAR SDR->HDR highlights, being limited to only 100 nits, are less defined – dramatically so in some instances, as in the first comparison, where a light is simply a blob with no visible edges compared to UHD HDR

- in some cases, UAR SDR->HDR->luma graded as UHD HDR is very close to UHD HDR

- the color difference between the UAR converted to HDR and the UHD HDR is subtle when watched on an SDR monitor, but it is there, and I like the UAR colors more than the UHD ones

- UAR frame size is just a little bit bigger than UHD – even if in a few cases it is up to around 6% bigger; it may not seem like much, but to me every pixel counts!

- the luma in each third “step” is not a simple “stretch” of the second; I’m pretty sure it is possible to get a similar result using a logarithmic curve, but I don’t know how to obtain it, or whether it would apply to any SDR-to-HDR conversion
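Rather than guessing a logarithmic formula, one option (a swapped-in technique, not the method used for the comparisons above) is to derive the curve from the data: with an SDR->HDR frame and the matching UHD HDR frame aligned, group pixels by their SDR->HDR luma and take the median UHD HDR luma in each group. The result is a 1D lookup curve that can be applied to other frames, or plotted to see whether it looks logarithmic. The function names and the 256-bin choice are assumptions for illustration.

```python
import numpy as np


def fit_luma_lut(src_y, ref_y, bins=256):
    """Fit a non-decreasing curve mapping src luma to ref luma from aligned frames.

    src_y, ref_y: float arrays in [0, 1] with the same shape (e.g. PQ-encoded Y).
    Returns (centers, targets): mean src luma per occupied bin and the median
    ref luma for the same pixels, forced to be non-decreasing.
    """
    src = np.asarray(src_y, dtype=np.float64).ravel()
    ref = np.asarray(ref_y, dtype=np.float64).ravel()
    edges = np.linspace(0.0, 1.0, bins + 1)
    idx = np.clip(np.digitize(src, edges) - 1, 0, bins - 1)  # bin index per pixel
    centers, targets = [], []
    for b in range(bins):
        sel = idx == b
        if sel.any():  # skip empty bins
            centers.append(src[sel].mean())
            targets.append(np.median(ref[sel]))  # median resists misaligned pixels
    targets = np.maximum.accumulate(np.array(targets))  # no tonal inversions
    return np.array(centers), targets


def apply_luma_lut(y, centers, targets):
    """Apply the fitted curve with linear interpolation between bins."""
    return np.interp(y, centers, targets)
```

A curve fitted on a few representative frames only describes that title and grade; whether it carries over to other SDR-to-HDR conversions remains the open question.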
